Google's March 2026 Core Update completed its rollout on April 8, 2026 — and it has reshuffled rankings more aggressively than any recent update. If your organic traffic changed significantly after March 27, this guide explains what happened and what to do next.

2026 Google Algorithm Update Timeline

- Feb 5 – Feb 27, 2026: Google Discover Core Update

- March 2026: March 2026 Spam Update

- March 27 – April 8, 2026: March 2026 Broad Core Update ← Primary focus

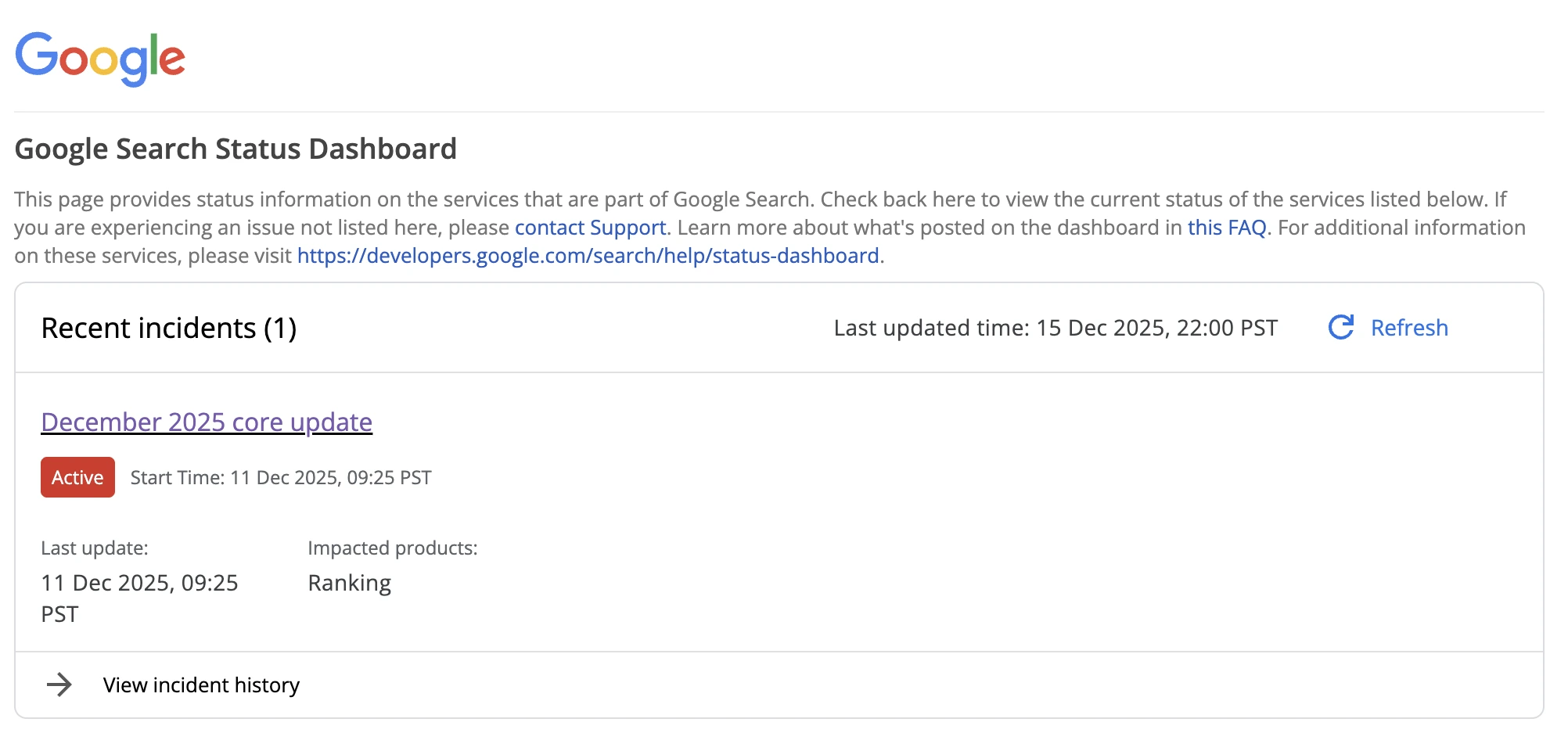

- December 11–29, 2025: December 2025 Core Update (Previous)

Google's first-ever standalone Discover update, running 21 days. Initially limited to English-language users in the United States, with global expansion planned. Distinct from traditional core updates - it targeted the Discover feed without simultaneously affecting web search.

A targeted spam enforcement action completed two days before the core update began. Sites with scaled, low-value, or manipulative content patterns were flagged before the broader quality recalibration.

The first broad core update of 2026. Google described it as a "regular update designed to better surface relevant, satisfying content for searchers." It applied globally across all languages and content types, taking 12 days to complete fully.

The predecessor update that ran 18 days. Focused on content quality, E-E-A-T, and integration of the Helpful Content system into core ranking. Many sites are still recovering from this update.

What Changed in the March 2026 Update

Google did not introduce entirely new ranking systems. Instead, it refined how existing systems evaluate content comparatively — your pages are measured against competing pages for the same query, not against an absolute checklist.

The four key shifts

- Information gain over volume: Pages that add genuine new insight, data, or perspective outperform longer pages that rehash existing content with different wording.

- Intent alignment as the primary filter: Exact-match keywords matter less than whether the page accurately fulfills the user's real goal behind the query.

- Harder line on AI content without added value: Mass-produced AI content lacking human expertise, original examples, or unique perspective saw some of the steepest drops — traffic losses of 60–90% were reported among the hardest-hit sites.

- Comparative evaluation: Rankings shifted not because sites were penalised, but because competitor pages with better intent alignment and depth displaced them. The update is re-ranking, not punishing.

Volatility & Ranking Shifts

Data from major tracking platforms shows the March 2026 update triggered unusually high movement — higher than the December 2025 update that preceded it:

- Top-3 URLs that shifted: 80%

- Top-10 pages with movement: 90.7%

- Monitored sites with visible changes: 55%+

- SEMrush peak volatility score: 9.5/10

- Top-10 pages that dropped below position 100: 24%

For context, the December 2025 update shifted 67% of top-3 positions. The March 2026 figure of 80% is 13 percentage points higher, a notable escalation. Sites with strong content quality, technical SEO, and authority signals showed significantly more stability throughout the rollout.

E-E-A-T: Now the Default Standard

E - Experience

Add first-hand examples, screenshots, case studies, and "here's what happened when I tested this" insights. Real experience cannot be faked at scale.

E - Expertise

Use descriptive author bios linked to credentials, keep content tightly focused on your niche, and maintain accurate technical depth across your topic cluster.

A - Authoritativeness

Build focused topic clusters, earn mentions from relevant sites in your space, and keep information current. Breadth without depth weakens authority signals.

T - Trustworthiness

Be transparent about monetization, provide clear contact information, and publish nothing misleading. Trust is the floor: without it, the other E-E-A signals cannot lift rankings.

Content Quality Standards After March 2026

This update rewards depth over volume and originality over completeness. Pages padded to hit a word count are losing to shorter, more purposeful pages that help users complete a specific task or decision.

What's working now

- Depth and specificity: Cover subtopics, FAQs, and edge cases while staying tightly focused on intent. Repetitive elaboration signals thin content to the algorithm.

- Original data and frameworks: Your own research, checklists, templates, and commentary add information gain that rephrased versions of already-ranking pages cannot match.

- Human-refined AI content: AI-drafted content layered with genuine expertise, examples, and local context is rewarded. Unedited bulk AI output is among the hardest-hit content types.

- Intent-first structure: Lead with a direct answer, then expand into scannable sections with descriptive headings. Mobile-first structure correlates with better engagement signals.

What's hurting rankings

- Generic introductions that delay the answer ("In today's digital landscape…")

- Thin topic coverage across a large number of pages (content sprawl without depth)

- AI-generated articles without editing, human examples, or original perspective

- Duplicate or near-duplicate category pages in e-commerce with no unique guidance
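The near-duplicate problem in the last item can be screened for before any manual review. The sketch below is one rough way to do that, assuming you already have the main text of each page; it compares pages by Jaccard similarity over three-word shingles. The function names, the sample URLs and copy, and the 0.5 threshold are all illustrative assumptions, and this is not a description of how Google detects duplication.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams (shingles) in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict, threshold: float = 0.5):
    """Yield (url_a, url_b, score) for page pairs at or above threshold.

    The threshold is an arbitrary starting point, not a known cutoff.
    """
    sets = {url: shingles(text) for url, text in pages.items()}
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            yield url_a, url_b, round(score, 2)


# Hypothetical page copy, for illustration only.
pages = {
    "/shoes/red": "Lightweight running shoe with breathable mesh upper "
                  "and cushioned sole",
    "/shoes/blue": "Lightweight running shoe with breathable mesh upper "
                   "and cushioned sole in blue",
    "/guides/fit": "How to measure your foot at home and choose the "
                   "right shoe width",
}

duplicates = list(near_duplicates(pages))
print(duplicates)  # [('/shoes/red', '/shoes/blue', 0.8)]
```

Pairs that score high are candidates for merging or for adding unique guidance; the comparison is pairwise, so on large sites you would likely run it per category rather than across the whole site.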

Winners & Losers by Sector

Analysis across major SEO tracking platforms and community reports reveals clear patterns in which content models gained and lost ground:

| Common losers | Common winners |
|---|---|
| AI content farms (60–90% traffic loss reported) | Niche authority sites with topical depth |
| Thin affiliate and review sites | Brands combining articles with tools or community |
| E-commerce with duplicated manufacturer descriptions | Original research and first-party data sources |
| Aggregators and syndication-heavy platforms | Sites with detailed author bios and trust signals |
| SMBs in competitive local niches (20–70% drops) | Content with clear user task completion focus |
| Sites with misaligned intent across topic clusters | Strong branded and official website domains |

A notable secondary effect: Google's AI Overviews (AI-generated summaries at the top of results) continue to reduce click-through rates even when underlying rankings hold steady. Traffic redistribution is happening on two fronts — rankings and click-through rates simultaneously.

How to Diagnose Your Site's Impact

The March 2026 update completed on April 8, 2026, so ranking data is now stable enough for accurate diagnosis. Focus on sustained changes rather than daily fluctuations.

Step-by-step diagnosis

- Set your analysis window: The update ran March 27 – April 8, 2026. Compare your Google Search Console and analytics data from the two weeks before and two weeks after this window.

- Check for topic-cluster patterns: In Search Console's Performance report, review the Queries and Pages tabs. Isolated single-page drops are often technical. Entire topic clusters losing visibility suggests a content quality or relevance issue.

- Rule out the March spam update: If drops appear concentrated on pages with aggressive monetization, link schemes, or scaled low-value content, the spam update (which preceded the core update by two days) may be the primary cause, and it requires different remediation.

- Benchmark against competitors: Did competing pages gain the rankings you lost? If so, compare their content depth, author credentials, and E-E-A-T signals with yours.

- Eliminate technical causes: Confirm that no crawling, indexing, or manual action issues coincided with the update window, using URL Inspection, the Page indexing report (formerly Coverage), and the Manual actions report in Search Console.
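The first two diagnosis steps (a before/after window and a check for cluster-wide drops) can be sketched in a few lines. This is a minimal illustration, not a finished tool: it assumes a CSV with `date`, `page`, and `clicks` columns, which is a hypothetical export layout, and it assumes the first URL path segment identifies the topic cluster, which will not hold for every site.

```python
import csv
import io
from collections import defaultdict
from datetime import date, timedelta

# Rollout window as stated in this guide.
ROLLOUT_START = date(2026, 3, 27)
ROLLOUT_END = date(2026, 4, 8)
WINDOW = timedelta(days=14)  # two weeks on either side


def cluster_of(url_path: str) -> str:
    """Hypothetical rule: the first path segment names the topic cluster."""
    parts = [p for p in url_path.split("/") if p]
    return parts[0] if parts else "(root)"


def compare_windows(rows) -> dict:
    """Sum clicks per cluster for the 14 days before and after the rollout.

    Days inside the rollout itself are ignored because rankings were
    still moving. Returns {cluster: (before, after, percent_change)}.
    """
    before = defaultdict(int)
    after = defaultdict(int)
    for row in rows:
        day = date.fromisoformat(row["date"])
        cluster = cluster_of(row["page"])
        clicks = int(row["clicks"])
        if ROLLOUT_START - WINDOW <= day < ROLLOUT_START:
            before[cluster] += clicks
        elif ROLLOUT_END < day <= ROLLOUT_END + WINDOW:
            after[cluster] += clicks
    report = {}
    for cluster in sorted(set(before) | set(after)):
        b, a = before[cluster], after[cluster]
        change = round((a - b) / b * 100, 1) if b else None
        report[cluster] = (b, a, change)
    return report


# Made-up sample rows, for illustration only.
SAMPLE = """date,page,clicks
2026-03-20,/guides/seo-basics,100
2026-03-21,/guides/link-building,100
2026-04-10,/guides/seo-basics,40
2026-04-11,/guides/link-building,60
2026-03-20,/tools/calculator,50
2026-04-10,/tools/calculator,55
2026-04-01,/tools/calculator,999
"""

report = compare_windows(csv.DictReader(io.StringIO(SAMPLE)))
for cluster, (b, a, change) in report.items():
    print(f"{cluster}: {b} -> {a} clicks ({change}%)")
```

In the sample, every page under `/guides` falls while `/tools` holds steady, which is the cluster-wide pattern that points toward content quality or relevance rather than an isolated technical fault.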

Recovery Playbook

Google consistently advises that recovery from a core update comes from holistic quality improvement, not single-point fixes. Most meaningful recoveries happen over 3–6 months, often accelerated by smaller rolling core updates that can register your improvements before the next named update.

- Audit and triage by business value: Group affected pages by topic cluster and prioritize those with backlink equity or revenue significance. Don't delete thin pages outright; upgrade them first and measure the response.

- Merge overlapping or thin content: Combine near-duplicate articles into a single, comprehensive resource that serves user intent better than any individual page did. A canonical, deep page outperforms five shallow ones.

- Add real experience and original insight: Layer in case studies, first-hand test results, frameworks, and original data. This is the single highest-impact improvement you can make to E-E-A-T signals.

- Strengthen trust infrastructure: Improve author bios with real credentials, add clear contact information, ensure monetization is transparent, and update outdated statistics or references throughout affected pages.

- Optimize for task completion, not time-on-page: Make it easy for users to accomplish their goal quickly. Good task completion reduces pogo-sticking and improves the behavioral signals Google uses as proxy quality indicators.

- Fix Core Web Vitals and mobile friction: Technical UX problems compound content quality issues. Address LCP, INP, CLS, and mobile usability alongside content improvements; they're evaluated together, not in isolation.

- Give changes time and track consistently: Monitor weekly in Search Console for at least 3 months. Google crawls and re-evaluates continuously, so quality improvements can register before the next named update, especially on pages with strong crawl frequency.
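For the Core Web Vitals step, it can help to turn raw measurements into a short list of pages that need attention. The sketch below rates each metric against Google's published thresholds (LCP 2.5 s and 4.0 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25), which Google assesses at the 75th percentile of page loads. The metric key names, the `audit` helper, and the sample values are assumptions made for illustration.

```python
# Published Core Web Vitals thresholds: (upper bound for "good",
# upper bound for "needs improvement"). Anything above is "poor".
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),    # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),   # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),     # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Classify one metric value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def audit(pages: dict) -> dict:
    """Return only the pages with at least one metric not rated good."""
    flagged = {}
    for url, metrics in pages.items():
        issues = {
            name: rate(name, value)
            for name, value in metrics.items()
            if rate(name, value) != "good"
        }
        if issues:
            flagged[url] = issues
    return flagged


# Hypothetical 75th-percentile field values, for illustration only.
pages = {
    "/guides/seo-basics": {"lcp_s": 2.1, "inp_ms": 180, "cls": 0.05},
    "/tools/calculator": {"lcp_s": 4.6, "inp_ms": 240, "cls": 0.08},
}

flagged = audit(pages)
print(flagged)
```

Running the same audit each week alongside the Search Console check gives a simple way to confirm that technical fixes landed before judging whether content changes are working.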

Track the official update status at status.search.google.com and review Google's core update guidance at developers.google.com.